Hence a natural and elegant idea to extend MAML: explicitly separate task-specific and task-independent components in the neural network. Another goal is to quickly identify a task based on little experience and adapt the policy accordingly. Task-specific and task-independent learningĪs mentioned earlier, one goal of meta-learning is to learn the underlying characteristics common to all tasks while recognising task-specific characteristics. Second, the gradient descent updates all the weights based on a few data points only.Firstly, updating all the weights requires computing a large gradient demanding a lot of memory and compute.

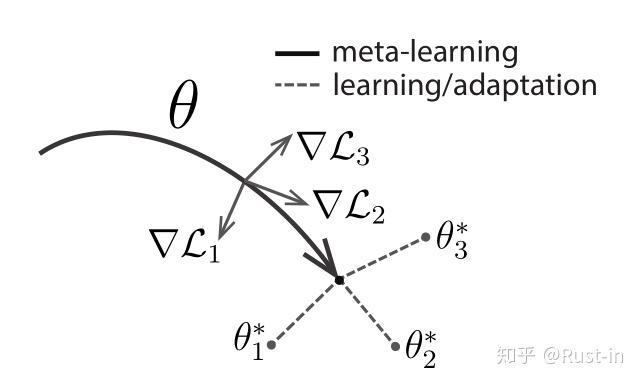

In particular, the need to do a full gradient descent step on all the weights suffers from two major defaults: MAML is a great algorithm, but there is room for improvement. GitHub Repository A Simple Extension: CAVIA This figure illustrates how FOMAML differs from MAML: It might be preferable to use it when the dimensions are prohibitively large and the second-order derivatives require too many resources. This algorithm is called first-order MAML (FOMAL). Surprisingly, if we omit the second-order term in matrix A and use the approximation A = I, we also obtain very good results. This is called the outer loop.Īs illustrated by the previous figure, MAML requires second-order derivatives to compute the gradient at θ. Second, for the same tasks, we sample multiple trajectories from the updated parameters θ’ and backpropagate to θ the gradient of the policy objective.Firstly, for a given set of tasks, we sample multiple trajectories using θ and update the parameter using one (or multiple) gradient step(s) of the policy gradient objective.The meta-training algorithm is divided into two parts: We are looking for a pretrained parameter that can reach near-optimal parameters for every task in one (or a few) gradient step(s)įor every task i=1,2,3, the parameter θ should reach a near-optimal parameter θ *. The figure below illustrates how MAML should work at meta-test time. Considering that we have found a good initialisation parameter θ from which we can perform efficient one-shot adaptation, and given a new task, the new parameter θ’, obtained by gradient descent, should achieve a good performance on the new task. Let’s see how we can do that! MAML Algorithm Meta-testing goalīefore explaining how to train MAML ( meta-training), let’s define what we would expect at meta-test time. Can we directly optimise the initialisation parameter to guarantee good adaptation? 
In MAML, the parameters of the model are explicitly trained to provide a high performance after fine-tuning via gradient descent. Hence, the policy should optimise the objectiveĪgain, a major flaw of this method is that the initial parameter θ is optimised to maximise the average return over all tasks but does not guarantee any fast adaptation. train an optimal policy over all these tasks before doing a fine-tuning adaptation. Therefore, another method might be to do multi-task learning, i.e. Moreover, in meta-learning, we have a distribution of meta-training tasks instead of a single task. However, these methods still require a large amount of data and are not suitable for fast few-shot adaptation. fine-tuning of a ResNet trained on ImageNet). The first natural way to learn a new task is to use transfer learning via fine-tuning (e.g. Transfer Learning and Multi-Task Learning From fine-tuning to MAML With MAML, you can train agents that quickly adapt in almost any dense-reward environment.

In this post, we introduce our first Meta-RL algorithm: MAML (Model-Agnostic Meta-Learning for Fast Adaptation of Deep Networks). Previous post: A simple introduction to Meta-Reinforcement Learning At meta-testing, we apply this algorithm to learn a near-optimal policy.
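The objectives discussed above can be written compactly. This is a standard formulation, not the author's own notation. The symbols $J_{\mathcal{T}_i}$ (expected return on task $\mathcal{T}_i$), $p(\mathcal{T})$ (task distribution) and $\alpha$ (inner-loop step size) are assumptions introduced here. The block contrasts the multi-task objective with the MAML objective and shows where the matrix A of the FOMAML approximation comes from, via the chain rule through a single inner step. Returns are maximised, so the inner step is gradient ascent. With a loss-minimisation convention, A would read $I - \alpha \nabla^2_\theta \mathcal{L}$ instead.

$$
\begin{aligned}
\text{Multi-task:}\quad & \max_{\theta}\; \mathbb{E}_{\mathcal{T}_i \sim p(\mathcal{T})}\big[ J_{\mathcal{T}_i}(\theta) \big] \\
\text{MAML:}\quad & \max_{\theta}\; \mathbb{E}_{\mathcal{T}_i \sim p(\mathcal{T})}\big[ J_{\mathcal{T}_i}(\theta'_i) \big],
\qquad \theta'_i = \theta + \alpha \nabla_{\theta} J_{\mathcal{T}_i}(\theta) \\
\text{Meta-gradient:}\quad & \nabla_{\theta} J_{\mathcal{T}_i}(\theta'_i)
= \underbrace{\big( I + \alpha \nabla^{2}_{\theta} J_{\mathcal{T}_i}(\theta) \big)}_{A}\, \nabla_{\theta'} J_{\mathcal{T}_i}(\theta'_i) \\
\text{FOMAML:}\quad & A \approx I \;\Longrightarrow\; \nabla_{\theta} J_{\mathcal{T}_i}(\theta'_i) \approx \nabla_{\theta'} J_{\mathcal{T}_i}(\theta'_i)
\end{aligned}
$$

Here $\nabla_{\theta} J_{\mathcal{T}_i}(\theta'_i)$ is the gradient of the post-adaptation return with respect to the initial parameter θ. $\nabla_{\theta'}$ is the ordinary gradient evaluated at $\theta'_i$. The Hessian term inside A is what makes full MAML expensive.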
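The two meta-training parts and the FOMAML shortcut can be sketched on a toy problem. This is a hedged illustration, not the post's implementation. Sampled trajectories and policy-gradient estimates are replaced by hypothetical quadratic "returns" with closed-form gradients and Hessians, so the exact second-order term (the matrix A) is easy to compute. All function names (`J`, `grad_J`, `adapt`, `meta_gradient`, `meta_train`) are hypothetical.

```python
import random

# Toy stand-in for the meta-RL setting (an assumption for illustration):
# task i has a concave "return" J_i(theta) = -0.5 * sum_k h_k * (theta_k - c_k)**2
# with a task-specific optimum c and curvature h. Exact gradients and a diagonal
# Hessian replace sampled trajectories, and the same J_i is used in both loops.


def J(theta, task):
    """Return of parameter vector theta on a task (higher is better)."""
    c, h = task
    return -0.5 * sum(hk * (tk - ck) ** 2 for tk, ck, hk in zip(theta, c, h))


def grad_J(theta, task):
    """Exact gradient of J with respect to theta."""
    c, h = task
    return [-hk * (tk - ck) for tk, ck, hk in zip(theta, c, h)]


def adapt(theta, task, alpha):
    """Part 1 (inner loop): one gradient-ascent step from theta to theta'."""
    return [tk + alpha * gk for tk, gk in zip(theta, grad_J(theta, task))]


def meta_gradient(theta, task, alpha, first_order=False):
    """Part 2 (outer loop): gradient of J_i(theta') with respect to theta."""
    theta_prime = adapt(theta, task, alpha)
    g_prime = grad_J(theta_prime, task)  # gradient evaluated at theta'
    if first_order:
        return g_prime  # FOMAML: approximate A by the identity
    _, h = task
    # MAML: A = I + alpha * Hessian of J_i at theta, which is diag(1 - alpha * h) here
    return [(1.0 - alpha * hk) * gk for hk, gk in zip(h, g_prime)]


def meta_train(tasks, dim, alpha=0.1, beta=0.05, steps=500, batch=4,
               first_order=False, seed=0):
    """Outer-loop gradient ascent on the average post-adaptation return."""
    rng = random.Random(seed)
    theta = [0.0] * dim
    for _ in range(steps):
        sampled = rng.sample(tasks, k=min(batch, len(tasks)))
        # Average the meta-gradients over the sampled tasks, then take one ascent step
        total = [0.0] * dim
        for task in sampled:
            g = meta_gradient(theta, task, alpha, first_order)
            total = [t + gk for t, gk in zip(total, g)]
        theta = [tk + beta * t / len(sampled) for tk, t in zip(theta, total)]
    return theta
```

Passing `first_order=True` switches to FOMAML, which simply drops the factor `(1 - alpha * h)`. At meta-test time, one call to `adapt(theta, new_task, alpha)` plays the role of the one-shot adaptation step. In a real meta-RL setting, both gradients would be estimated from sampled trajectories, and an autodiff library would typically compute the second-order term.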
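The sketch below makes the task-specific/task-independent split concrete. It assumes the design of the CAVIA paper (Zintgraf et al., 2019). A few context parameters φ are fed to the model as extra inputs and reset to zero for every task. They are the only parameters updated in the inner loop, while the shared weights θ are updated only in the outer loop. The toy regression tasks, the linear model and every function name are hypothetical. To keep the gradients short, the outer loop uses a first-order approximation (as in FOMAML) rather than full second-order gradients.

```python
import random

# Hypothetical toy tasks (an assumption for illustration): y = 2.0 * x + d.
# The slope 2.0 is shared by every task (task-independent); the offset d is task-specific.
# Model: y_hat = w * x + b + u * phi
#   theta = [w, b, u]: shared weights, updated only in the outer loop
#   phi: scalar context parameter, reset to 0 for each task, updated only in the inner loop


def predict(theta, phi, x):
    w, b, u = theta
    return w * x + b + u * phi


def loss(theta, phi, data):
    """Mean squared error of the model on a list of (x, y) pairs."""
    return sum((predict(theta, phi, x) - y) ** 2 for x, y in data) / len(data)


def adapt_context(theta, support, alpha):
    """Inner loop: one gradient step on phi only, starting from phi = 0."""
    phi = 0.0
    u = theta[2]  # derivative of y_hat with respect to phi
    grad_phi = sum(2 * (predict(theta, phi, x) - y) * u for x, y in support) / len(support)
    return phi - alpha * grad_phi


def outer_grad(theta, support, query, alpha):
    """First-order outer-loop gradient w.r.t. theta: phi' is treated as a constant."""
    phi = adapt_context(theta, support, alpha)
    n = len(query)
    res = [(predict(theta, phi, x) - y, x) for x, y in query]
    grad_w = sum(2 * r * x for r, x in res) / n
    grad_b = sum(2 * r for r, _ in res) / n
    grad_u = sum(2 * r * phi for r, _ in res) / n
    return [grad_w, grad_b, grad_u]


def sample_task(rng, n=10):
    """Draw a task offset d, then a support set and a query set from that task."""
    d = rng.uniform(-3.0, 3.0)

    def draw():
        xs = [rng.uniform(-1.0, 1.0) for _ in range(n)]
        return [(x, 2.0 * x + d) for x in xs]

    return draw(), draw()


def meta_train(steps=1000, alpha=0.1, beta=0.05, batch=4, seed=0):
    """Outer loop: gradient descent on the shared weights theta."""
    rng = random.Random(seed)
    theta = [0.0, 0.0, 1.0]  # u starts non-zero so that phi can affect predictions
    for _ in range(steps):
        grads = []
        for _ in range(batch):
            support, query = sample_task(rng)
            grads.append(outer_grad(theta, support, query, alpha))
        theta = [t - beta * sum(g[k] for g in grads) / batch for k, t in enumerate(theta)]
    return theta
```

Only the scalar φ changes per task, so the inner-loop update touches one number instead of all the weights, which targets the first drawback listed above. After meta-training, w should end up close to the shared slope 2.0, while each task's offset is absorbed by the adapted φ.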
